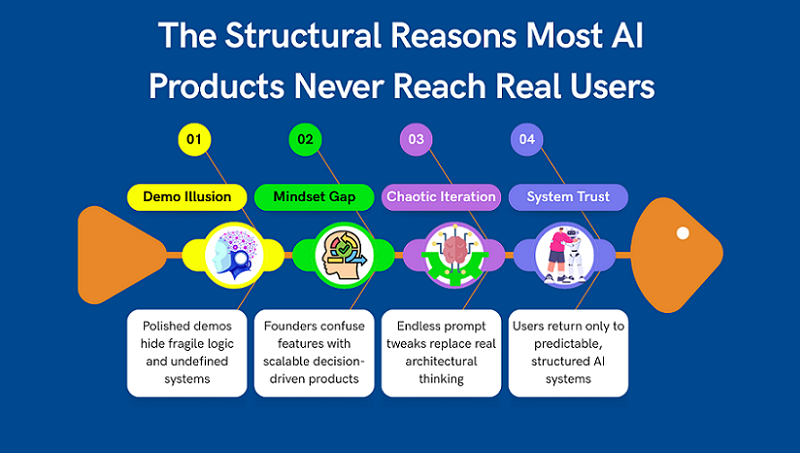

Today, creating an AI product is technically easier than ever. Models are readily available, no-code tools lower the barrier to entry, and examples of successful launches are constantly appearing in the news feed. But the paradox is that most AI products never reach real users. They don’t fail because of bad code or a weak model—they fail much earlier.

Most often, the problem lies in the founder’s mindset and how they understand the word “product.” Many launch a demo, wrap it in a beautiful interface, and call it a service. The first tests go well, friends say “wow,” but then something goes wrong. Users don’t return, the scenarios break, and any improvements turn into chaotic prompt edits. It feels like “the AI is acting weird,” when in fact, it’s the system itself that’s acting weird.

In this article, we’ll explore why AI products don’t reach the point of real use, where exactly they break down, and which mistakes are repeated over and over again. We’ll skip the technical jargon and stick to product logic.

This article isn’t about models or tools. It’s about why good ideas don’t become products, and how to distinguish a temporary demo from a trustworthy system.

If you’re building an AI service, micro-SaaS, or a no-code product, you’ll almost certainly recognize yourself here. And that’s good: it means the problem can still be fixed.

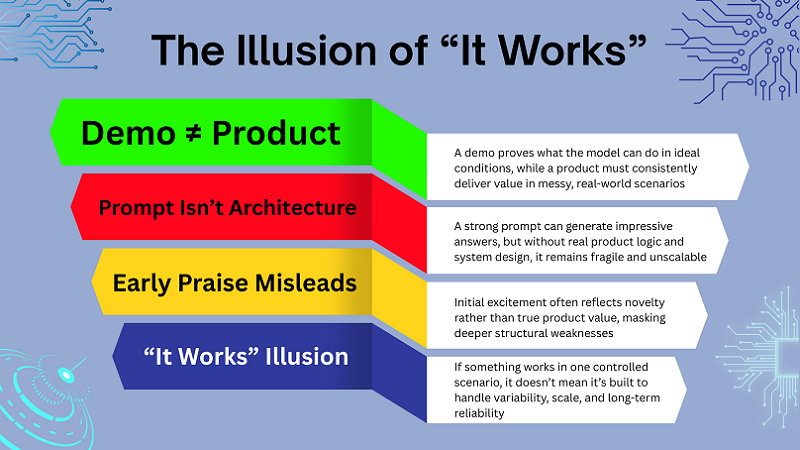

1. Mistaking a Demo for a Product

Most AI projects fail not at the scaling stage, but much earlier, when a demo is mistaken for a product. A demo shows what the model can do; a product is responsible for delivering a consistent user experience. These are fundamentally different things, yet they are often confused.

In a demo, everything works under ideal conditions: one scenario, one type of request, minimal context. In reality, users act chaotically, ask questions incorrectly, and expect predictable results.

When there’s no system in place, any deviation from the “ideal case” begins to break the product. And instead of scaling, the founder begins endlessly fixing prompts.

The problem is compounded by the fact that a demo is easy to sell to oneself. It looks smart, provides attractive answers, and creates the illusion of readiness. But it’s precisely this illusion that most often kills a product.

In this section, we’ll explore the line between a demo and a product and why it’s so important to recognize it as early as possible.

A Prompt Is Not a Product

One of the most common mistakes is thinking that a good prompt is already a product. Yes, a well-thought-out prompt can provide impressive answers, especially at the start. But at its core, it’s just an instruction for a model, not product logic.

A prompt doesn’t make decisions, doesn’t manage context, and doesn’t understand the user’s goal. It simply reacts to input. As soon as the scenario becomes more complex, the prompt begins to crumble.

A real product knows what it does, why it does it, and what state the user is in. If everything rests on one big prompt, the system becomes fragile and unpredictable. This is why products built solely on prompts don’t scale well and require constant manual intervention. This isn’t architecture—it’s a temporary construct.
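To make the distinction concrete, here is a minimal sketch of product logic living outside the prompt. Everything in it is an illustrative assumption, not a prescribed design: the `Stage` values, the `UserState` fields, and the instruction texts are hypothetical. The point is only that the code, not the prompt, decides where the user is and what should happen next.

```python
from dataclasses import dataclass
from enum import Enum


class Stage(Enum):
    # Hypothetical stages for an imaginary advice product.
    NEEDS_GOAL = "needs_goal"
    NEEDS_DETAILS = "needs_details"
    READY = "ready"


@dataclass
class UserState:
    goal: str = ""
    details: str = ""


def current_stage(state: UserState) -> Stage:
    """Product logic: decide where the user is, independent of any prompt."""
    if not state.goal:
        return Stage.NEEDS_GOAL
    if not state.details:
        return Stage.NEEDS_DETAILS
    return Stage.READY


def build_prompt(state: UserState) -> str:
    """Each stage gets a narrow instruction instead of one catch-all prompt."""
    stage = current_stage(state)
    if stage is Stage.NEEDS_GOAL:
        return "Ask the user what they want to achieve. Do not give advice yet."
    if stage is Stage.NEEDS_DETAILS:
        return f"The goal is: {state.goal}. Ask one clarifying question."
    return f"The goal is: {state.goal}. Details: {state.details}. Propose the next step."
```

With this split, changing how the product behaves at one stage means editing one small instruction, rather than reworking a single large prompt and hoping nothing else shifts.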

Why Early Praise Is Misleading

Almost every AI founder has encountered this: early users say the product is “really cool.” It’s nice, but dangerous. Early feedback often evaluates the quality of responses rather than the product’s value.

People are impressed that the AI understands anything at all and responds coherently. But that doesn’t mean they’re ready to use the product regularly or pay for it.

This kind of feedback rarely reveals where the system breaks down in real-world use. It doesn’t identify problems with logic, context, or repeatability.

As a result, the founder begins to optimize what they already like and ignores structural weaknesses. These only surface later, when fixing them becomes expensive.

When “It Works” Actually Means “It Breaks Later”

In AI products, the phrase “everything works for me” almost always means “it works in one scenario.” This is the most dangerous point, as it creates a false sense of readiness.

As soon as new user types, different goals, or non-standard requests appear, the system begins to behave unpredictably. Responses contradict each other, logic is lost, and trust declines.

The problem isn’t with the model or the API. The problem is that the product wasn’t designed to handle variability.

Scaling in this case doesn’t break the product—it simply reveals the errors inherent in the very beginning. And that’s why it’s so important to distinguish between “it works now” and “it could work stably.”

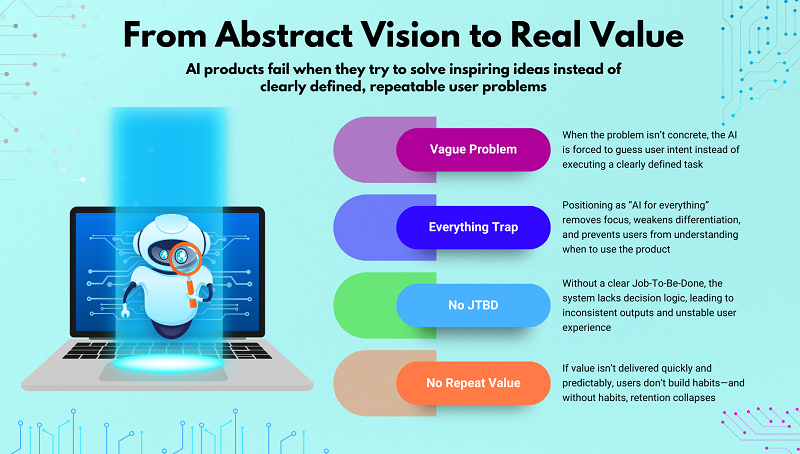

2. Solving Abstract Ideas Instead of Concrete Problems

One of the key reasons AI products fail to reach real users is the attempt to solve an abstract idea instead of a concrete task. Founders often start with an inspiring description but not a clear problem statement. Under these conditions, the AI is forced to “guess” what is expected of it rather than perform a specific task.

At the start, this may seem normal, especially if the initial model responses are impressive. But as usage increases, inconsistencies, contradictions, and instability begin to emerge. The user perceives this as a “raw” product, even if they can’t explain why.

Abstract formulations don’t provide the system with a basis for decision-making. As a result, the product doesn’t scale and doesn’t become part of the user’s daily workflow. This is where many AI projects lose their chance to move from experimentation to production.

The “AI That Helps With Everything” Trap

The promise of “AI that helps with everything” almost always works against the product. The user doesn’t understand the specific scenario in which the service will be useful. Without a clear focus, the product doesn’t set expectations and doesn’t reinforce behavior.

Such solutions often turn into a one-size-fits-all chat that “can do everything” but doesn’t solve anything well enough.

The user tries it a couple of times and never returns because they don’t see any specific value.

Broad positioning also complicates product development: each new improvement pulls it in a different direction. As a result, the team loses focus, and the system loses stability.

No Clear Job-To-Be-Done

When it’s unclear what work AI performs for the user, the product begins to break down internally. The system doesn’t understand which decisions are a priority and which are secondary. This leads to inconsistent results and unstable behavior.

A clear Job-To-Be-Done defines the framework for logic, context, and UX. Without it, each request becomes a separate experiment. The user is forced to constantly clarify, correct, and monitor the result.

Under such conditions, AI doesn’t reduce the workload; on the contrary, it creates additional work. This quickly destroys trust in the product.

Why Users Don’t Return

Users only return to products where value is felt quickly and repeatably. If the result is different every time, trust doesn’t develop. Even good responses don’t compensate for the lack of consistency.

An abstract task doesn’t allow for a predictable experience. Users can’t integrate the product into their workflow. As a result, the service remains “interesting,” but not essential. When value isn’t cemented into habit, the product loses users even before it reaches the growth stage. The issue here isn’t marketing, but how the problem was defined in the first place.

If you’re at the very beginning and still defining what problem your AI product should solve, start here:

Day 1 — Where to Find Great SaaS Ideas (and How to Vet Them). It walks through how to identify concrete, monetizable problems instead of abstract ideas, and how to validate them before building anything.

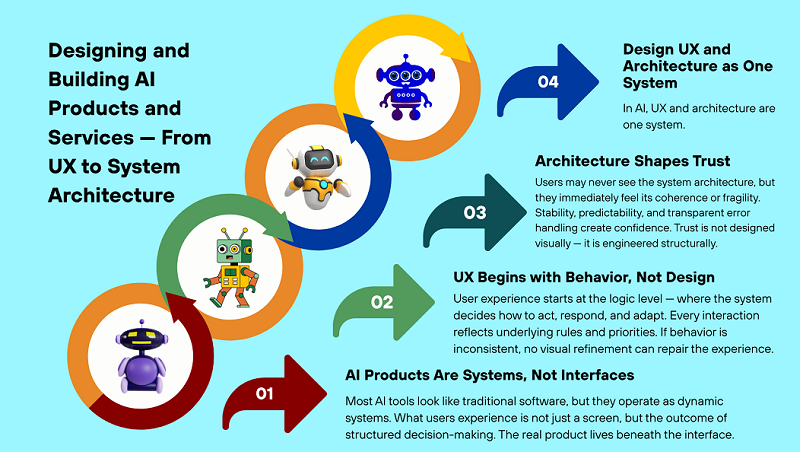

3. Building Screens Before Systems

Another common mistake is starting with the interface instead of the system. Many AI products look beautiful, but lack clear logic underneath. This is especially common in no-code environments, where screens are assembled faster than product decisions are made.

Focusing on the UI creates the illusion of progress. The product seems almost ready because it has buttons, forms, and scenarios. But beneath the surface, a lack of structure lurks.

When the user begins using the product in real-world conditions, the system can’t handle the load. Errors appear suddenly and are difficult to fix without reworking the entire logic. As a result, a beautiful interface becomes a mask for a fragile product.

UI-First Thinking in No-Code Tools

No-code tools simplify interface creation, but they increase the focus on screens. Founders begin to think in terms of “page,” “form,” and “button” rather than “decision” and “logic.”

This leads to the product being designed as a set of screens rather than as a decision-making system. In this approach, AI is simply inserted into the UI, without understanding its role.

As a result, the system becomes dependent on the interface, not vice versa. Any change to the flow requires manual edits and complicates product development.

When UX Hides Broken Logic

Good design can temporarily hide problems in logic. The user feels the product is “beautiful,” but over time, they begin to notice strange behavior. Responses contradict each other, the system forgets the context, and decisions appear random.

In the early stages, this is often attributed to “AI quirks.” But the real problem is the lack of a clear structure. UX can’t compensate for a weak system.

When logic breaks down, no interface can save the user’s trust. They simply stop using the product.

Why Systems Scale, Screens Don’t

Interfaces don’t scale on their own. Only the logic behind them scales. If the system understands what to do and why, the interface can be changed painlessly.

When logic is hardwired into screens, every change becomes a risk. The product becomes fragile and poorly adapts to growth.

This is why sustainable AI products are built as systems, with the interface merely as a way to interact with them. This is the fundamental difference between a demo and a real product.

If you’re trying to understand what it actually means to build AI as a system — not just a set of prompts behind a UI — this is explored in depth in How to Build Scalable AI Products Without Code (Using ChatGPT as the Core Layer).

It breaks down how to structure decision logic, context layers, and product architecture so the system stays stable even as usage grows.

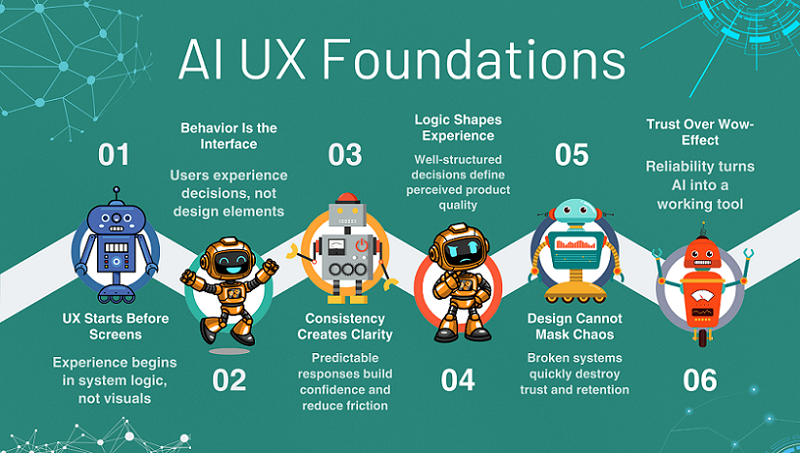

4. Ignoring Context as a Core Product Layer

One of the most underestimated reasons for the failure of AI products is ignoring context as a fully-fledged product layer. Many founders consider context to be secondary: “we’ll add memory later,” “this can be solved with a prompt.” In practice, it is context that determines whether a product feels intelligent or useless.

When AI starts from scratch every time, the product loses consistency, predictability, and trust. The user is forced to repeat the same things, clarify goals, and correct answers. This may be unnoticeable in early demos, but in real use, the problem immediately becomes apparent.

Context is not a technical detail, but product logic: what the system knows about the user, the process, and the current state of the task. Without it, AI remains a response generator, not part of the service. This is precisely why products without context rarely reach regular use. They may impress, but they don’t retain users.

Treating Every Request as Isolated

When each request is treated as a separate event, the AI loses the sense of continuity. The product doesn’t “understand” what came before and doesn’t know where to lead the user next. As a result, responses may be formally correct, but contextually useless.

The user feels like they’re interacting not with the system, but with disjointed responses. This undermines the sense of intelligence and reduces the product’s value after just a few sessions. This approach may work in tests, but quickly breaks down in a real-world scenario.

Isolated requests are a quick path to frustration because the product doesn’t evolve with the user. It simply reacts, but doesn’t support them.

What Users Expect the Product to Remember

Users don’t think in terms of “memory” or “state.” They simply expect the product to remember their goal, previous steps, and constraints. This is a basic expectation shaped by other digital services.

When AI forgets what the user has already explained or selected, a sense of chaos ensues. People have to waste time repeating themselves instead of moving forward. In SaaS products, this is perceived as poor UX, not an “AI feature.”

Context allows a product to be consistent across a whole session, not just clever in a single reply. This is why memory is not a feature, but a foundation.
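One way to picture context as a product layer is a small record that travels with every request. The sketch below is an assumption for illustration only: `SessionContext`, its fields, and `build_request` are hypothetical names, and a real product would decide for itself what to store and where.

```python
from dataclasses import dataclass, field


@dataclass
class SessionContext:
    """What the product remembers about the user between requests."""
    goal: str = ""
    constraints: list[str] = field(default_factory=list)
    completed_steps: list[str] = field(default_factory=list)

    def record_step(self, step: str) -> None:
        self.completed_steps.append(step)

    def as_preamble(self) -> str:
        """Render the remembered facts as text placed before each request."""
        lines = [f"User goal: {self.goal or 'not stated yet'}"]
        if self.constraints:
            lines.append("Constraints: " + "; ".join(self.constraints))
        if self.completed_steps:
            lines.append("Already done: " + "; ".join(self.completed_steps))
        return "\n".join(lines)


def build_request(context: SessionContext, user_message: str) -> str:
    """Every request carries the context, so nothing starts from scratch."""
    return context.as_preamble() + "\n\nUser: " + user_message


ctx = SessionContext(goal="launch a newsletter", constraints=["no paid tools"])
ctx.record_step("picked a topic")
request_text = build_request(ctx, "What should I do next?")
```

Here the user never has to restate the goal or the constraints: the product supplies them on every turn.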

Context Loss as a Trust Killer

Trust in an AI product is built on the feeling that the system understands the user. When context is lost, this trust vanishes instantly. Even one glitch can call the entire product into question.

The user begins to double-check answers, doubt recommendations, and spend more time than it saves. At this point, the product ceases to be a helper.

The most dangerous thing is that such glitches are perceived not as bugs, but as the product’s “stupidity.” And regaining trust after this is extremely difficult.

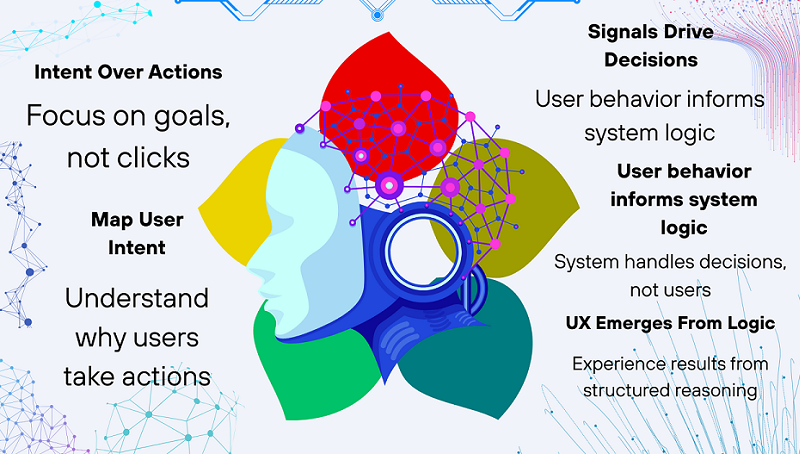

5. Confusing Generation With Real Value

Many AI products get stuck at the content generation level, mistaking it for the ultimate value. Texts, lists, and answers look impressive, but they don’t necessarily solve the user’s problem. This is the key mistake that prevents products from moving from interest to utility.

The user isn’t interested in the generation itself, but in the result: the decision, the choice, the next step. When a product simply “writes,” it shifts the bulk of the work onto humans. As a result, AI increases the volume of information without reducing the workload.

Real value arises when a product takes on some of the thinking. Without this, AI remains a tool, not a service. This is why generation without logic rarely leads to user retention.

Content ≠ Outcome

A well-written text doesn’t equal a solved problem. The user may receive the perfect answer but still be confused about what to do next. This creates the illusion of help without any real results.

AI founders often confuse the quality of generation with the value of a product. But in real life, it’s not the text that’s valued, but the action or decision it leads to.

If a product doesn’t lead the user to a result, it remains informational noise, albeit a beautiful one.

No Decision-Making Inside the Product

When AI doesn’t make decisions, it doesn’t take responsibility. It merely suggests options, leaving the entire cognitive load to the user. This approach quickly becomes tiring.

Product value emerges when the system itself selects, filters, and recommends. This isn’t about control, but about assistance.
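As a rough illustration of “selects, filters, and recommends,” the sketch below narrows a list of candidates to one recommendation plus a couple of alternatives. The `Option` fields, the budget rule, and the `fit_score` value are all hypothetical assumptions; how a real product scores fit is its own design question.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class Option:
    name: str
    monthly_cost: float
    fit_score: float  # assumed 0.0 to 1.0; how fit is estimated is up to the product


def recommend(options: List[Option], budget: float) -> Tuple[Optional[Option], List[Option]]:
    """Filter by a hard constraint, rank by fit, return one pick and up to two alternatives."""
    affordable = [o for o in options if o.monthly_cost <= budget]
    ranked = sorted(affordable, key=lambda o: o.fit_score, reverse=True)
    if not ranked:
        return None, []
    return ranked[0], ranked[1:3]
```

The user sees a single suggested choice with a short fallback list, instead of every generated option at once.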

Without integrated decision-making, AI remains an assistant, not a service. And assistants rarely become products worth paying for.

Why Users Feel Overloaded Instead of Helped

Paradoxically, many AI products actually make users more tired. Instead of saving time, they add new layers of choice and analysis.

When a product presents too many options without clear logic, it shifts the thinking onto humans. The user feels like they’re doing the system’s work for it.

True help is simplification. If AI doesn’t do this, the product loses its meaning, even if it generates excellent solutions.

6. Avoiding Real Users for Too Long

One of the most common, yet rarely acknowledged, reasons for AI product failure is avoiding real users. Many teams spend years tinkering with a product, believing it’s not ready for release. As a result, the product exists only in the founder’s mind and in closed demos.

The problem is that without contact with reality, an AI system doesn’t receive the feedback it needs to grow. Errors go unnoticed, hypotheses go untested, and confidence in the product is built on assumptions.

This is especially dangerous for AI products, where system behavior only manifests itself in a variety of real-world scenarios. The longer the product is isolated from users, the more painful the launch moment is. And the higher the chance that users simply won’t see the value.

The “Not Ready Yet” Syndrome

The “not ready yet” syndrome seems rational, but in practice, it’s destructive. The founder convinces themselves that the logic, interface, or AI responses need some more refinement. In reality, this is often a fear of receiving negative feedback.

AI products don’t become “ready” in a vacuum. They only become sustainable through use. Every delayed release is a lost opportunity to uncover real problems.

As a result, the product either never launches or launches too late, when energy and focus have already been lost.

Building in Isolation

When a product is created without users, it develops in a closed system. All decisions are made based on assumptions, not behavior. This is especially dangerous for AI services, where the nuances of use are everything.

A founder may be confident that the product is logical and useful, but users think differently. Without real use cases, the system is optimized for an imaginary user.

As a result, upon first contact with the market, it turns out that the product solves the wrong problem or does so in an inconvenient way.

Why No-Code Doesn’t Remove This Fear

No-code lowers the technical barrier, but it doesn’t remove the psychological one. The ability to quickly build a product doesn’t mean you’re ready to share it with the world.

Many founders continue to endlessly “improve” the product, even when technical limitations are removed. The fear of evaluation remains the same.

Therefore, no-code is a tool for acceleration, but not a substitute for determination. Real progress begins only with the first users, not with the next update.

7. Scaling Before the Product Is Ready

Attempts to scale an AI product before it’s stable almost always end in failure. Growth amplifies everything: both strengths and weaknesses. If the system is unstable, scaling only accelerates decay.

Many founders begin thinking about metrics, automation, and growth without ensuring the product behaves predictably. As a result, problems that could have been fixed early on turn into systemic failures.

AI products are especially sensitive to this because their behavior depends on context, logic, and decisions. If these layers aren’t in place, growth becomes dangerous.

Premature Automation

Automating weak logic doesn’t make a product stronger; it only makes problems happen faster. The AI makes the same mistakes, just more often and at a larger scale.

Founders often automate processes that haven’t yet proven their resilience. As a result, the product loses flexibility and becomes more difficult to fix.

The right approach is to first ensure that the logic works in manual or semi-automated mode and only then scale.
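A semi-automated mode can be as simple as having a person approve or reject each result until the logic has earned trust. The sketch below assumes a hypothetical `ReviewGate` with made-up default thresholds (20 reviews, 95% approval); the right numbers depend entirely on the product.

```python
from dataclasses import dataclass, field


@dataclass
class ReviewGate:
    """Tracks human review outcomes and decides when automation is allowed."""
    min_reviews: int = 20            # assumed threshold, not a recommendation
    min_approval_rate: float = 0.95  # assumed threshold, not a recommendation
    outcomes: list[bool] = field(default_factory=list)

    def record(self, approved: bool) -> None:
        self.outcomes.append(approved)

    def approval_rate(self) -> float:
        if not self.outcomes:
            return 0.0
        return sum(self.outcomes) / len(self.outcomes)

    def can_automate(self) -> bool:
        """Automate only after enough reviews and a high enough approval rate."""
        return (
            len(self.outcomes) >= self.min_reviews
            and self.approval_rate() >= self.min_approval_rate
        )
```

Until `can_automate()` returns true, every result goes through a person, which keeps the logic easy to change while it is still being proven.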

Metrics Without Product Stability

Metrics can create the illusion of control. Increased traffic, requests, or sessions don’t mean the product is working properly.

If system behavior is unstable, the numbers only hide the real problems. Users may come, but they won’t stay.

Without robust product logic, analytics becomes noise, not a decision-making tool.

When Growth Exposes Structural Flaws

Growth doesn’t break a product—it reveals what’s already broken. Errors in logic, context, or decision-making become apparent precisely when the load increases.

What worked for ten users can completely fall apart for a hundred. And that’s okay—if you’re prepared.

The problem arises when growth starts too early, and the team doesn’t understand what exactly needs to be fixed.

Final Thoughts — AI Products Fail Because of Thinking, Not Technology

Most AI products fail to reach real users not because of models, APIs, or a lack of code. They fail much earlier — at the thinking level.

Founders confuse demos with products, generation with value, and interfaces with systems. They avoid users, fear the release, and try to scale before the product is sustainable.

AI products require a different approach: systemic, consistent, and solution-oriented, not answer-oriented.

The context, logic, and responsibility of the system are more important than any prompts or UI effects.

A real product begins when AI takes over some of the thinking, not just writing text.

This is what distinguishes a service that is used from a tool that is quickly forgotten.

Understanding these mistakes is the first step to creating a sustainable AI product.

The solutions to these mistakes are discussed in detail in the pillar article on scalable, no-code AI products.